Communication is fundamental to human existence, and our greatest medium for it is spoken and written language. It is difficult to determine when language first emerged, although it was likely around 50,000 to 150,000 years ago, around the time of early Homo sapiens. Since then, language has spread, diversified and evolved to reflect changes in demographics and culture, and today there are roughly 6,500 languages in the world, each with its own dialects and accents.

While this diversity is remarkable, in practice it can make communicating with your international peers difficult. Travellers in the past might have relied on personal interpreters, and today we can still use phrases learned from a guidebook, or even a full dictionary. Yet without putting in the effort to learn a meaningful amount of vocabulary and grammar yourself, you will mostly be restricted to grunts, gestures and smiles for basic communication. All this is changing, however, with the rise of automatic translators.

Perhaps the most widely known and used translation application, Google Translate, has become an essential tool for travellers, business people and tourists alike. It is now advanced enough to handle complex sentences in many languages and, thanks to machine learning, has become better at interpreting the context surrounding them. Whole documents can now be translated in seconds, often with impressive accuracy. That is not to say there will be no mistakes, as there usually are with any translation, but, especially for the most popular languages, gone are the days when machine translations looked ridiculous to native speakers.

I remember my Spanish teacher, back in 2014, warning the class not to use Google Translate for our Spanish essays, insisting, “I can always tell.” It is safe to say she was proven wrong, and in hindsight she was probably just trying to call our bluff, but her warning shed light on the power of these tools and the impact they would have on the future, as well as on the stark rise in accuracy since the years when she likely could tell for real. Helping lazy teenagers with their homework, however beneficial that may seem to them at the time, is a far cry from the most important uses of these tools.

There are currently hundreds of critically endangered languages, and there is strong motivation to keep these minority languages alive. On paper, everyone speaking the same language might seem simpler, but in reality a language that dies out takes thousands of years of unique features, history and culture with it. Monolingual speakers often assume that other languages are basically their own language with different words, perhaps a slightly different word order, and maybe a different writing system.

Yet many features of one language do not exist, or exist only partially, in others, and they offer valuable insights into the context and environment in which that language developed. For example, speakers of Guugu Yimithirr, an Aboriginal Australian language, describe spatial positions using cardinal directions rather than words like “left” and “right”. Preserving these languages should therefore be a priority. Translation tools can help by expanding their libraries to include minority languages, making knowledge from the past more accessible to non-native speakers and helping people form a more rounded view of the world.

Google’s ML Kit Translation API is showcased in the ML Kit quickstart sample on GitHub, which can be found here. This tutorial focuses on the Kotlin code, although a Java version is provided as well. Note that this API is intended for simple, casual translations; the API documentation suggests using the Cloud Translation API if you need higher fidelity.

Translation on the VIA VAB-950

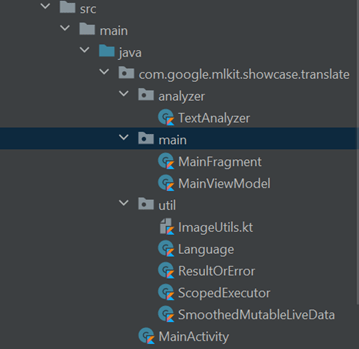

For this code, the classes are organized into three packages: “analyzer”, “main” and “util”. The main class sits in the same directory but outside these three packages; see for yourself here:

The “util” package, as the name suggests, holds small utility classes. For example, “Languages” holds the language codes and their corresponding full language names, such as “en” for “English”.
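As a rough illustration of what such a utility might look like, the sketch below maps language codes to English display names using the JDK's `java.util.Locale`. The `Language` class and `languageTable` function are hypothetical names, not the sample's actual code; in an Android app, the list of codes could plausibly come from ML Kit's `TranslateLanguage.getAllLanguages()` instead of being hard-coded.

```kotlin
import java.util.Locale

// Hypothetical language-code helper; the project's own "Languages" class
// may be structured differently.
data class Language(val code: String) : Comparable<Language> {
    // Human-readable English name for the code, e.g. "en" -> "English".
    val displayName: String
        get() = Locale.forLanguageTag(code).getDisplayLanguage(Locale.ENGLISH)

    // Sort languages alphabetically by their display name.
    override fun compareTo(other: Language): Int = displayName.compareTo(other.displayName)
}

// Builds an ordered code -> name lookup table, sorted by display name.
fun languageTable(codes: List<String>): Map<String, String> =
    codes.map(::Language).sorted().associate { it.code to it.displayName }
```

For example, `languageTable(listOf("es", "en", "de"))` yields `{en=English, de=German, es=Spanish}`, ordered by display name so it could feed a language picker directly.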

The two main classes to look at are “TextAnalyzer” and “MainFragment”. The most important is “MainFragment”, a fairly large class that handles the image analysis, initializes CameraX and binds the camera use cases. It also draws the image overlay, which is what the user actually sees.

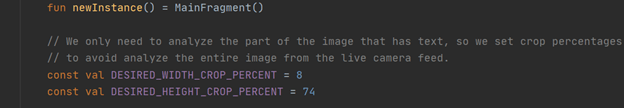

First, some important attributes to note are the crop percentages. Since we only need to analyze the parts of the image that contain text, we crop each frame from the live camera feed rather than analyzing the entire image.
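To make the idea of crop percentages concrete, here is a minimal plain-Kotlin sketch that turns a width and a height crop percentage into a centered crop rectangle. It assumes each percentage describes how much of that dimension to remove in total, split evenly between both sides. `CropRect` and `centeredCrop` are hypothetical stand-ins (on Android you would more likely use `android.graphics.Rect`), and the example values are illustrative rather than taken from the project.

```kotlin
// Hypothetical plain-Kotlin stand-in for android.graphics.Rect.
data class CropRect(val left: Int, val top: Int, val right: Int, val bottom: Int)

// Shrinks a full frame by the given percentages, removing half of each
// crop from either side so that the remaining region stays centered.
fun centeredCrop(
    imageWidth: Int,
    imageHeight: Int,
    widthCropPercent: Int,
    heightCropPercent: Int
): CropRect {
    // Pixels to trim from each side (fractional pixels are truncated).
    val insetX = (imageWidth * widthCropPercent / 100f / 2).toInt()
    val insetY = (imageHeight * heightCropPercent / 100f / 2).toInt()
    return CropRect(insetX, insetY, imageWidth - insetX, imageHeight - insetY)
}
```

With an illustrative 640x480 frame, an 8% width crop and a 74% height crop, `centeredCrop(640, 480, 8, 74)` gives `CropRect(left=25, top=177, right=615, bottom=303)`: a wide, short strip suited to a single line of text.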

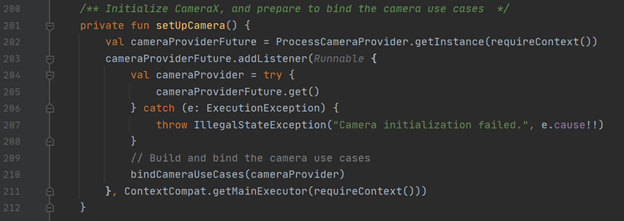

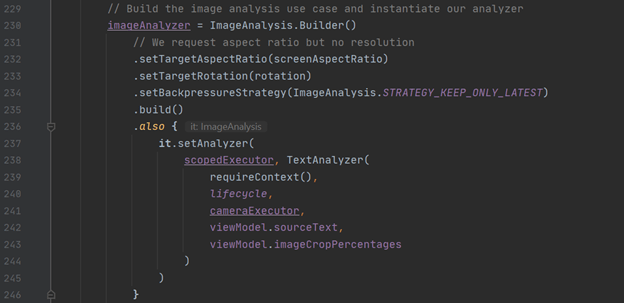

CameraX is then initialized in the private “setUpCamera” function, after which “bindCameraUseCases” binds the camera use cases together; this is also where the main “imageAnalyzer” object is declared.
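Before building use cases such as Preview and ImageAnalysis, CameraX samples commonly pick whichever of the two standard aspect ratios (4:3 or 16:9) is closest to the screen's. We have not confirmed that this project does exactly this. The pure-Kotlin sketch below uses a hypothetical `TargetRatio` enum in place of CameraX's `AspectRatio.RATIO_4_3` and `AspectRatio.RATIO_16_9` constants, so it runs without Android.

```kotlin
import kotlin.math.abs
import kotlin.math.ln
import kotlin.math.max
import kotlin.math.min

// Hypothetical stand-in for CameraX's AspectRatio constants.
enum class TargetRatio { RATIO_4_3, RATIO_16_9 }

const val RATIO_4_3_VALUE = 4.0 / 3.0
const val RATIO_16_9_VALUE = 16.0 / 9.0

// Picks the standard ratio closest to the screen's, comparing in log space
// so that being 1.5x too wide and 1.5x too narrow count as equally far off.
fun closestAspectRatio(width: Int, height: Int): TargetRatio {
    val previewRatio = ln(max(width, height).toDouble() / min(width, height))
    return if (abs(previewRatio - ln(RATIO_4_3_VALUE)) <= abs(previewRatio - ln(RATIO_16_9_VALUE))) {
        TargetRatio.RATIO_4_3
    } else {
        TargetRatio.RATIO_16_9
    }
}
```

For example, `closestAspectRatio(1080, 1920)` returns `RATIO_16_9`, while `closestAspectRatio(480, 640)` returns `RATIO_4_3`. Taking the larger dimension over the smaller one means the result does not depend on whether the device is in portrait or landscape.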

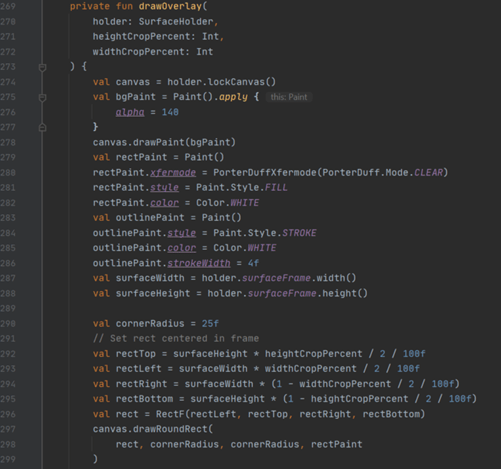

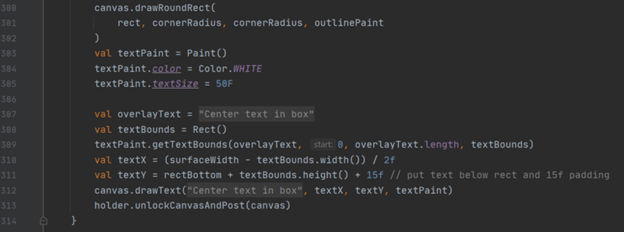

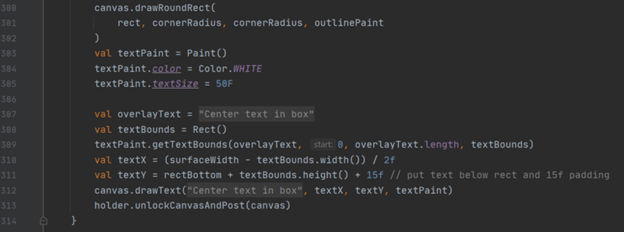

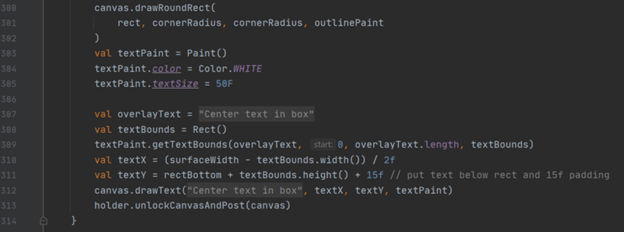

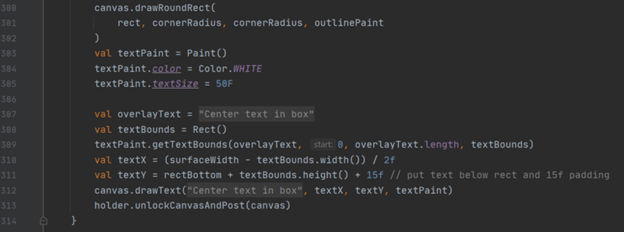

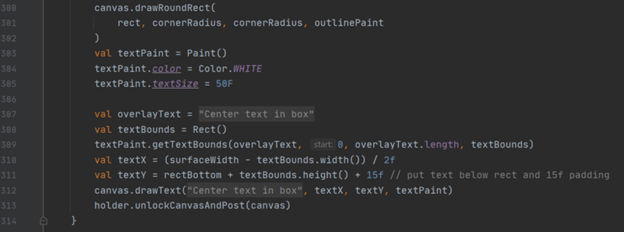

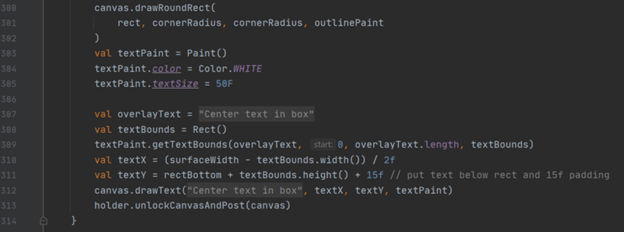

Finally, the “drawOverlay” function draws the rectangles that show the user where to center the camera.
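The geometry behind such an overlay can be sketched without any Android dependencies. Assuming the guide rectangle keeps 100 minus the crop percentage of each view dimension and is centered in the view, the hypothetical `overlayBox` function below computes its corners. On Android, the resulting bounds could then be drawn with `Canvas.drawRoundRect` using a `RectF` and a `Paint`, though we have not confirmed that this is how the project draws it.

```kotlin
// Hypothetical plain-Kotlin rectangle; on Android this would typically be a RectF.
data class Box(val left: Float, val top: Float, val right: Float, val bottom: Float)

// Computes where the guide rectangle sits on a view of the given size:
// it keeps (100 - crop)% of each dimension and is centered in the view.
fun overlayBox(viewWidth: Int, viewHeight: Int, widthCropPercent: Int, heightCropPercent: Int): Box {
    val boxWidth = viewWidth * (100 - widthCropPercent) / 100f
    val boxHeight = viewHeight * (100 - heightCropPercent) / 100f
    // Split the leftover space evenly on both sides to center the box.
    val left = (viewWidth - boxWidth) / 2
    val top = (viewHeight - boxHeight) / 2
    return Box(left, top, left + boxWidth, top + boxHeight)
}
```

For example, `overlayBox(1000, 2000, 8, 74)` returns `Box(left=40.0, top=740.0, right=960.0, bottom=1260.0)`. Using the same percentages for the overlay and for the frame crop keeps what the user sees aligned with what is actually analyzed.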

The “TextAnalyzer” class takes the frames passed in from the camera and returns any text detected within the cropped region marked out earlier. The “analyze” function calculates and adjusts aspect ratios and uses them to create bitmaps. However, the most important function here is “recognizeTextOnDevice”, which, as the name suggests, passes the image to the ML Kit Vision API to detect text and returns whatever text it finds.
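The rotation and aspect-ratio handling in “analyze” can be illustrated with a small pure-Kotlin sketch. It rests on two assumptions that are our own rather than confirmed details of the project. First, when the frame is rotated by 90 or 270 degrees, the width and height crops swap axes, because the image's width then runs along the screen's vertical axis. Second, for unusually wide frames (an aspect ratio above a hypothetical threshold of 3), the height crop is halved so that not too much of the text is cut away. `effectiveCropFractions` is a hypothetical name, and the ML Kit text recognition call itself is not shown.

```kotlin
// Hypothetical helper: returns (widthFraction, heightFraction) of the raw
// frame to crop away, adjusted for rotation and very wide frames.
fun effectiveCropFractions(
    imageWidth: Int,
    imageHeight: Int,
    rotationDegrees: Int,
    heightCropPercent: Int,
    widthCropPercent: Int
): Pair<Float, Float> {
    // Aspect ratio of the raw (unrotated) frame.
    val aspectRatio = imageWidth.toFloat() / imageHeight
    // Assumed heuristic: crop half as much height from very wide frames
    // (integer division, so odd percentages round down).
    val heightPercent = if (aspectRatio > 3f) heightCropPercent / 2 else heightCropPercent
    return when (rotationDegrees) {
        // Rotated frames: the screen's vertical axis maps onto the image width.
        90, 270 -> Pair(heightPercent / 100f, widthCropPercent / 100f)
        else -> Pair(widthCropPercent / 100f, heightPercent / 100f)
    }
}
```

For example, `effectiveCropFractions(640, 480, 90, 74, 8)` returns `(0.74, 0.08)`. These fractions could then be applied to the frame's dimensions to produce the crop rectangle before the bitmap is passed on for text recognition.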

That just about wraps everything up. Here’s how the app looks when running:

For more information on this specific API, visit Google’s page here. Also, visit our website’s blog section to find all the latest news and tutorials on the VIA VAB-950!