By now, discussing the prevalence of social media in modern society has become almost as ordinary as talking about the weather and, to some, about as tedious. From mass data breaches and the spread of misinformation to the rise of so-called “influencers” as role models who present an (at best) questionable standard for self-worth and body image, there is plenty to discuss. Of course, traditional media and news outlets will always leap at the chance to highlight every hiccup the big social media companies make, and why not? Despite these problems, the companies appear to be doing relatively little to address them, and the few laws and regulations that do exist do little to curb their laissez-faire attitude.

Yet it is easy to forget that social media has also driven advancement in a wide array of areas, most prominently in AI. While it could be argued that AI in this area has been put to some unnerving uses, there are plenty of innocent innovations and mainstream applications to look at. Some of you may be familiar with the different ‘lenses’ on Snapchat that let you change features on your face, or even swap it completely with someone else’s, with startling accuracy. In Photoshop, AI has become a powerful tool for editing images, for example by separating objects in the foreground of an image from the background to highlight what is important.

This leads nicely on to the application we will be looking at today: ML Kit’s Selfie Segmentation API, which is showcased in the ML Kit Quickstart sample on GitHub, which can be found here. The sample uses it alongside the CameraX API, and the physical camera used is the MIPI CSI-2 camera.

Selfie Segmentation on the VIA VAB-950

This tutorial is written in Java, but the Kotlin code is also available in the same package. Please also note that Google still marks this API as beta, so you may encounter some problems, although in my experience these have been rare.

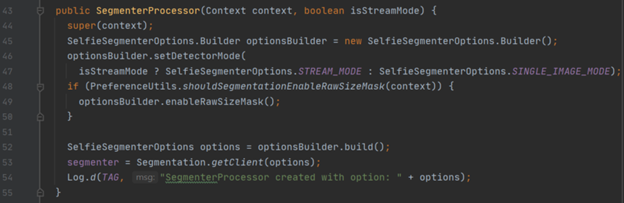

There are three main classes in this API that we need to look at, as they are fundamental to how it works: “SegmenterProcessor”, “SegmentationGraphic” and “CameraXLivePreviewActivity”. The first, “SegmenterProcessor”, is arguably the most important, as this is where the images are processed and the “segmenter” object is instantiated. A lot of what happens in this class is done “under the hood”, which means that we can’t see it, but if you want to read more about what is happening, click the link here for an insightful blog on the inner workings of this API.

In this class, the “segmenter” object is first instantiated in the constructor, and this is also where the program checks whether the raw size mask is enabled (this option can be toggled in the settings part of the app).

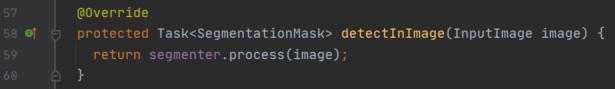

Next, the “detectInImage” method takes the input feed image by image and runs the “process” method on each one (this is where the “under the hood” operations occur). The result of the “process” method is then returned.

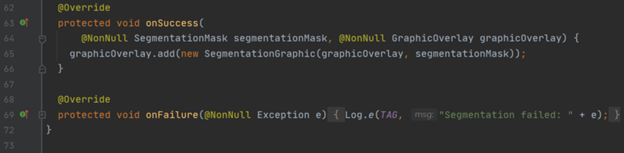

Finally, there are two more methods, “onSuccess” and “onFailure”. The “onSuccess” method, as the name suggests, runs when an image has been processed successfully, and then works with the graphic overlay to update the visual graphic that the user will see. “onFailure” is called when the image cannot be processed, and is passed the exception describing what went wrong.
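The success/failure flow described above can be tried out without any Android dependencies. The following is only a minimal sketch: it uses `java.util.concurrent.CompletableFuture` as a stand-in for the asynchronous result that ML Kit returns, and the class `CallbackFlowSketch`, its `int[]` frames and its log messages are all hypothetical names invented for illustration, not code from the Quickstart sample.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CompletableFuture;

/** Hypothetical, Android-free illustration of the onSuccess/onFailure flow. */
final class CallbackFlowSketch {
    /** Records what each callback did, in place of drawing on a real overlay. */
    final List<String> log = new ArrayList<>();

    /** Stand-in for the asynchronous "process" call; a null frame simulates a failure. */
    CompletableFuture<int[]> process(int[] frame) {
        CompletableFuture<int[]> result = new CompletableFuture<>();
        if (frame == null) {
            result.completeExceptionally(new IllegalArgumentException("no frame"));
        } else {
            result.complete(frame.clone()); // pretend the copy is the segmentation mask
        }
        return result;
    }

    /** Runs "process" on one frame and routes the outcome to the matching callback. */
    void detectInImage(int[] frame) {
        process(frame).whenComplete((mask, error) -> {
            if (error != null) {
                onFailure(error);
            } else {
                onSuccess(mask);
            }
        });
    }

    /** Called with the mask when processing succeeds. */
    void onSuccess(int[] mask) {
        log.add("overlay updated with " + mask.length + " mask values");
    }

    /** Called with the exception when processing fails. */
    void onFailure(Throwable e) {
        log.add("segmentation failed: " + e.getMessage());
    }
}
```

In the real sample the success callback adds a graphic to the overlay rather than writing to a log, but the routing idea is the same: one frame in, exactly one of the two callbacks out.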

The next class is the “SegmentationGraphic” class. This class controls how the graphic overlays are drawn onto the live image being presented back to the user. Firstly, the constructor sets out the important class attributes, notably coordinates to scale the image and a Boolean to check if the raw size mask is enabled.

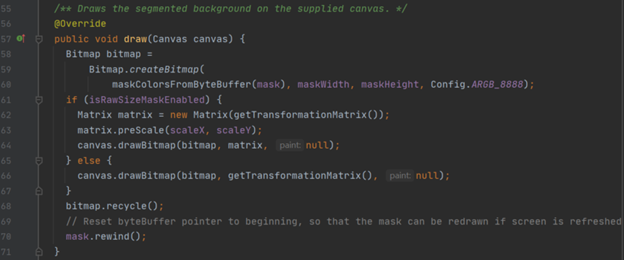
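The scaling bookkeeping done in the constructor can be sketched in plain Java. This is an illustrative assumption about how such values might be derived, not the sample's actual code: the class `MaskScaleSketch`, its field names, and the rule "the raw size mask is in use when the mask and image dimensions differ" are all hypothetical.

```java
/** Hypothetical sketch of the scale attributes a segmentation graphic might keep. */
final class MaskScaleSketch {
    /** True when the mask does not already match the image size (assumed rule). */
    final boolean rawSizeMask;
    /** Horizontal factor that stretches the mask to the image width. */
    final float scaleX;
    /** Vertical factor that stretches the mask to the image height. */
    final float scaleY;

    MaskScaleSketch(int maskWidth, int maskHeight, int imageWidth, int imageHeight) {
        rawSizeMask = maskWidth != imageWidth || maskHeight != imageHeight;
        // Cast before dividing so the ratio is not truncated by integer division.
        scaleX = (float) imageWidth / maskWidth;
        scaleY = (float) imageHeight / maskHeight;
    }
}
```

For example, with illustrative sizes of a 256x256 mask and a 480x640 image, `new MaskScaleSketch(256, 256, 480, 640)` would report a raw size mask with scale factors of 1.875 and 2.5.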

The main method in this class is the “draw” method, which takes a “Canvas” object as its input. Here, a bitmap is created and drawn onto the canvas, taking into account whether the raw size mask is enabled.

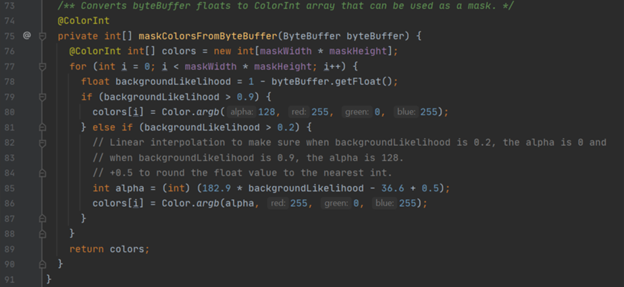

Notice that when the bitmap is created in the code, there is a call to “maskColorsFromByteBuffer”. This is also a method within the “SegmentationGraphic” class:

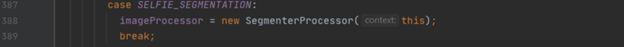
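To make the idea behind “maskColorsFromByteBuffer” concrete, here is a minimal, Android-free sketch of converting a buffer of per-pixel confidence values into overlay colors. It is loosely modeled on the approach in the screenshot, but the class `MaskColorSketch`, the hand-written `argb` helper (standing in for Android's `Color.argb`), the magenta tint and the 0.2/0.9 thresholds are assumptions chosen for illustration.

```java
import java.nio.ByteBuffer;

/** Hypothetical sketch of turning a confidence mask into ARGB overlay colors. */
final class MaskColorSketch {
    // Assumed thresholds and opacity, used only for this illustration.
    static final float OPAQUE_ABOVE = 0.9f;
    static final float TRANSPARENT_BELOW = 0.2f;
    static final int MAX_ALPHA = 128;

    /** Packs alpha, red, green and blue (0-255 each) into a single ARGB int. */
    static int argb(int a, int r, int g, int b) {
        return (a << 24) | (r << 16) | (g << 8) | b;
    }

    /**
     * Reads width * height floats from the buffer's current position, each taken
     * to be a foreground confidence in [0, 1], and returns one color per pixel.
     * Likely-background pixels get a tint; likely-foreground pixels stay transparent.
     */
    static int[] maskColors(ByteBuffer mask, int width, int height) {
        int[] colors = new int[width * height];
        for (int i = 0; i < colors.length; i++) {
            float background = 1f - mask.getFloat();
            if (background > OPAQUE_ABOVE) {
                colors[i] = argb(MAX_ALPHA, 255, 0, 255);
            } else if (background > TRANSPARENT_BELOW) {
                // Ramp the alpha linearly between the two thresholds for a soft edge.
                float t = (background - TRANSPARENT_BELOW) / (OPAQUE_ABOVE - TRANSPARENT_BELOW);
                colors[i] = argb(Math.round(t * MAX_ALPHA), 255, 0, 255);
            }
            // Otherwise the entry stays 0, which is fully transparent.
        }
        return colors;
    }
}
```

The linear ramp between the two thresholds is a design choice that avoids a hard, jagged outline around the person; a single cut-off would be simpler but tends to look harsher on a live preview.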

Finally, the “CameraXLivePreviewActivity” class is used to work with the CameraX API. You can read about this class in more detail in our face detection blog here, but essentially it acts as the bridge between the live feed coming from the camera (in the case of the VIA VAB-950, this would be the MIPI CSI-2 camera) and the main logic of the program. Two use cases are bound within this class, through the “bindPreviewUseCase” and “bindAnalysisUseCase” methods. The use case for selfie segmentation is relatively simple: the “imageProcessor” object is set to a new “SegmenterProcessor” object, which is instantiated at the point where “imageProcessor” is assigned.

With that, we have wrapped up just about all of the code! Here are some pictures of the application in use:

For more information on this specific API, visit Google’s page here. Also, visit our website’s Perspectives section to find all the latest news and tutorials on the VIA VAB-950!