In a previous blog here, I rewrote a tutorial on building an app that had the potential to identify objects. That blog focused on setting up the CameraX API and building the application around it. Whilst object identification was mentioned briefly, and we saw that a custom tflite model can be loaded, it did not go into much depth. That is the purpose of today’s blog.

Object detection and identification are becoming increasingly important across all industry sectors. As a major driving force behind a new wave of automation, they also provide a backbone for a potential societal revolution, for instance Japan’s Society 5.0. We have already reached the point where many tasks that most people would only entrust to a human could be done better by AI, making a real difference. One example is using AI cameras to identify potential wildfires: round-the-clock cameras installed in towers spread around a forest can identify flames or smoke and alert staff. In recent years, wildfires have become a progressively larger problem; the 2019/20 bushfire season in Australia, for example, was a major tragedy.

Furthermore, object identification can be used to speed up long, monotonous tasks without sacrificing accuracy. The example we will look at in today’s tutorial is classifying birds. Environmentalists may want to survey a habitat with a camera over a long period, so they could use AI to detect and classify birds (or any other animal) in real time and avoid going through the footage manually. These abilities extend to many real-world logistics tasks, from counting the number of fish in a pool, to identifying the type of wine on your dinner table, to measuring how often a certain make of car drives down a road. Google’s ML Kit API is able to detect and track objects in a still or live image. It also lets us implement our own custom classification models, although the ML Kit Quickstart app already comes with some.

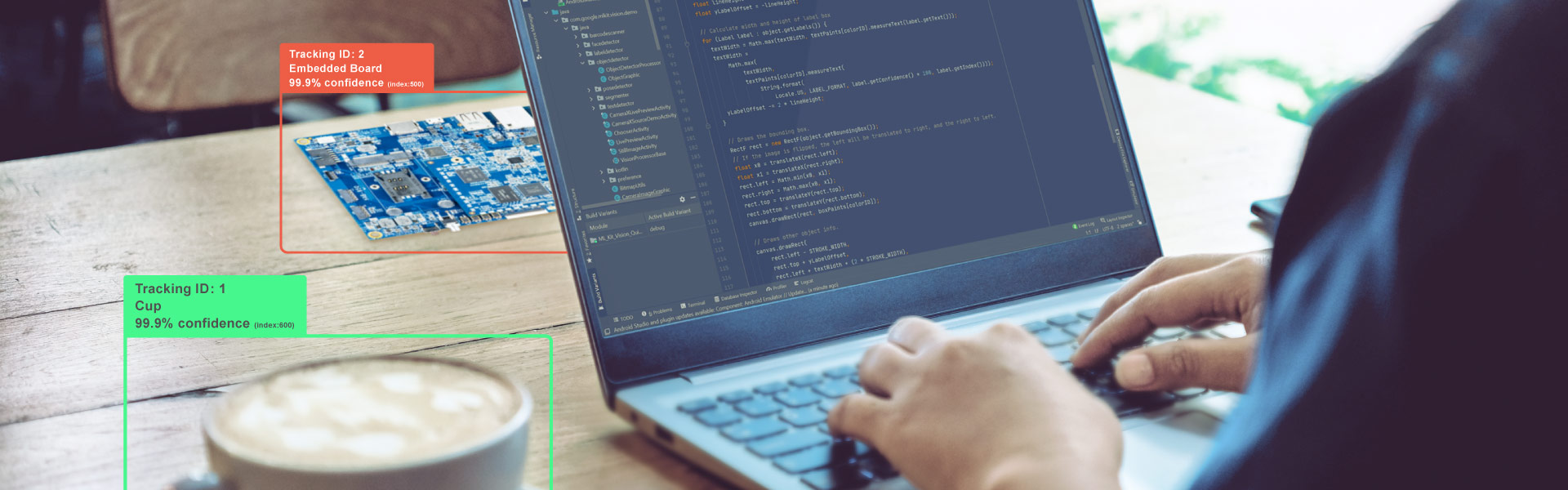

Object Identification on the VIA VAB-950

As mentioned, object identification is implemented on the VIA VAB-950 using the ML Kit Quickstart app. Check out the GitHub here to download it for yourself, or read our tutorial on installing Google’s ML Kit here. The app is used alongside the CameraX API, and the physical camera is the MIPI CSI-2 Camera.

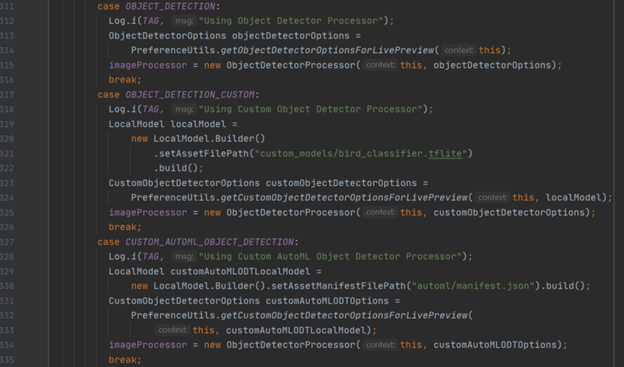

Following the pattern of our other tutorials, there are three main classes that are fundamental to how this works: “CameraXLivePreviewActivity”, “ObjectDetectorProcessor” and “ObjectGraphic”. You can read about the first one in more depth in our face detection blog here, but essentially this class acts as the bridge between the live feed coming from the MIPI CSI-2 Camera and the main logic of the program. It does this through two use cases, set up in the methods “bindAnalysisUseCase” and “bindPreviewUseCase”, and through a “VisionImageProcessor” declared as “imageProcessor”.

There are three use cases associated with object detection. The second one, on line 321, is where you can implement your own classifier as a tflite model; in this example, it classifies birds. The two use case methods “bindAnalysisUseCase” and “bindPreviewUseCase” are then wrapped together by the “bindAllCameraUseCases” method.

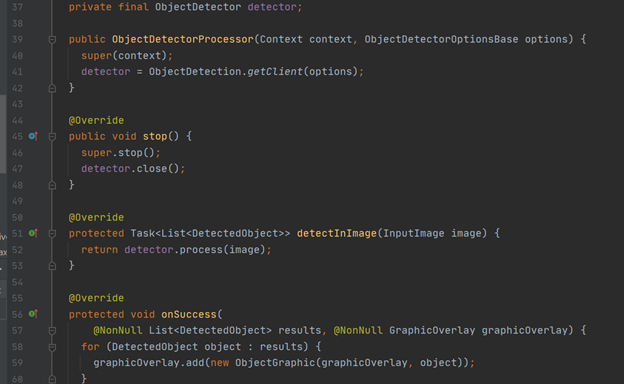

Arguably the most important (yet simplest) class is “ObjectDetectorProcessor”. This is where an “ObjectDetector” object is instantiated and the “process” method is called, using the live image feed as input. When an object is detected, “onSuccess” is called, which is where the graphic overlay is added at the position of the detected object(s).

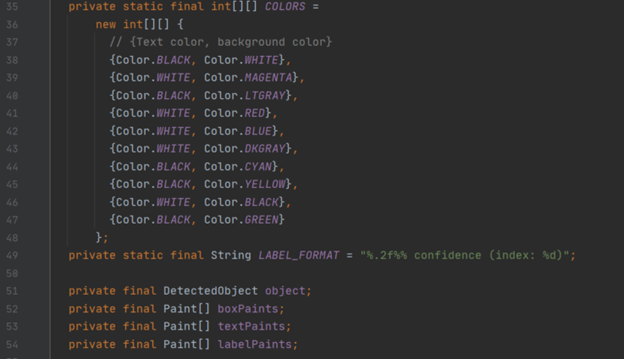

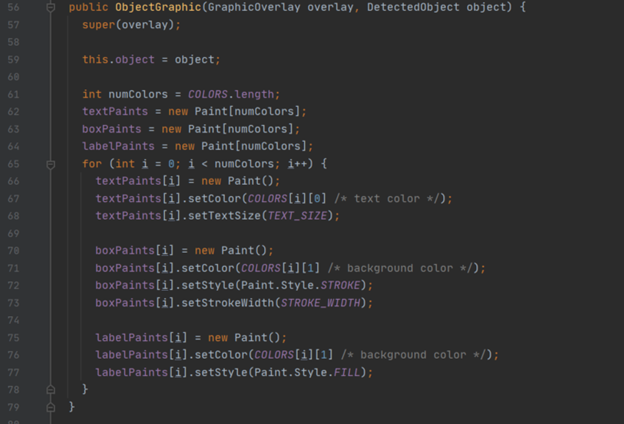
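To make the flow from “process” to “onSuccess” easier to follow, here is a simplified, Android-free sketch of the same idea. It is not the Quickstart code: every type in it (“SketchObject”, “SketchOverlay”, “SketchDetector”, “SketchProcessor”) is a hypothetical stand-in, the frame is just an array of pixels, and detection runs synchronously, whereas the real app works with ML Kit and Android types. Clearing the overlay before each frame is also an assumption made for the sketch.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-in for one detection result: a bounding box and a label.
final class SketchObject {
    final int left, top, right, bottom;
    final String label;

    SketchObject(int left, int top, int right, int bottom, String label) {
        this.left = left;
        this.top = top;
        this.right = right;
        this.bottom = bottom;
        this.label = label;
    }
}

// Hypothetical stand-in for the graphic overlay: it only records what would be drawn.
final class SketchOverlay {
    final List<String> graphics = new ArrayList<>();

    void clear() { graphics.clear(); }

    void add(String graphic) { graphics.add(graphic); }
}

// Hypothetical detector: turns a frame (here just raw pixels) into detection results.
interface SketchDetector {
    List<SketchObject> detect(int[] frame);
}

final class SketchProcessor {
    private final SketchDetector detector;

    SketchProcessor(SketchDetector detector) {
        this.detector = detector;
    }

    // Called once per camera frame: run detection, then hand the results to onSuccess.
    void process(int[] frame, SketchOverlay overlay) {
        overlay.clear(); // assumption: graphics from the previous frame are discarded
        List<SketchObject> results = detector.detect(frame);
        onSuccess(results, overlay);
    }

    // Adds one graphic per detected object, at the position of that object.
    void onSuccess(List<SketchObject> results, SketchOverlay overlay) {
        for (SketchObject o : results) {
            overlay.add(o.label + " [" + o.left + "," + o.top + "," + o.right + "," + o.bottom + "]");
        }
    }
}
```

The point of the split is that “process” only knows how to get results, while “onSuccess” only knows how to turn results into graphics, so a different detector can be swapped in without touching the drawing side.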

This leads nicely on to the final predominantly used class, “ObjectGraphic”. It contains the methods for drawing boxes around detected objects and, if you are using classification, for displaying alongside each box what it believes the object to be. When a graphic is created, attributes such as text size, width and colour are set up; for instance, the different colours for text and background are declared in a two-dimensional array. The colours are then looped through so that each box and its corresponding text is a different colour:

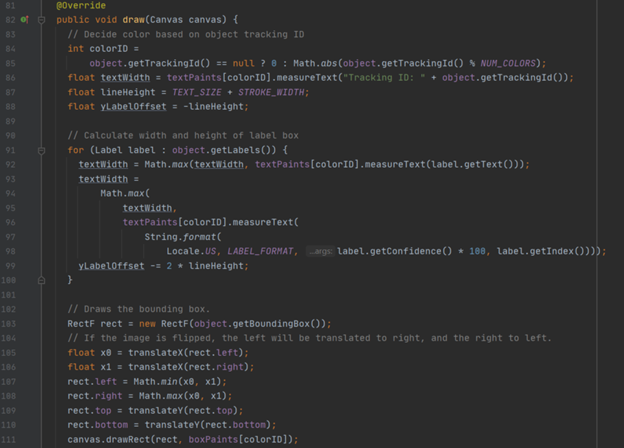
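The colour-cycling idea can be sketched in plain Java as follows. This is only an illustration: the class name “ColorCycleSketch”, the colour values and the use of a simple object index are assumptions, and the Quickstart app may choose its index and colours differently. Each row of the array holds a text colour and a background colour as ARGB integers, and the index wraps around once the rows run out.

```java
final class ColorCycleSketch {
    // Each row is {text colour, background colour} as ARGB ints (illustrative values).
    static final int[][] COLORS = {
        {0xFF000000, 0xFFFFFFFF}, // black text on white
        {0xFFFFFFFF, 0xFFFF00FF}, // white text on magenta
        {0xFF000000, 0xFFCCCCCC}, // black text on light grey
        {0xFFFFFFFF, 0xFFFF0000}, // white text on red
    };

    // Picks a row for the given object index, wrapping around the array.
    // floorMod keeps the result in range even for a negative index.
    static int[] colorsFor(int objectIndex) {
        return COLORS[Math.floorMod(objectIndex, COLORS.length)];
    }

    static int textColor(int objectIndex) {
        return colorsFor(objectIndex)[0];
    }

    static int backgroundColor(int objectIndex) {
        return colorsFor(objectIndex)[1];
    }
}
```

Keeping the text and background colours in the same row means the pair always stays readable together, whichever row an object ends up with.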

The main method is the “draw” method, which takes a Canvas object as input. Here, the height and width of the rectangular bounding boxes are calculated within the first for loop, then drawn.

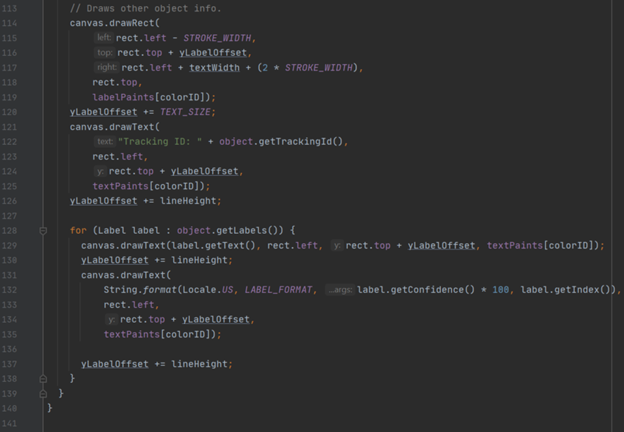

Other information about the object is then drawn, and the second for loop draws the other labels.

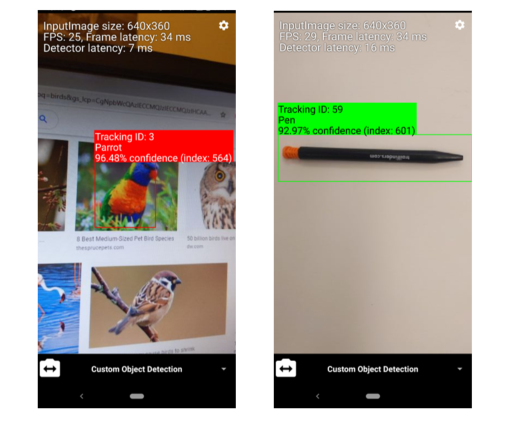

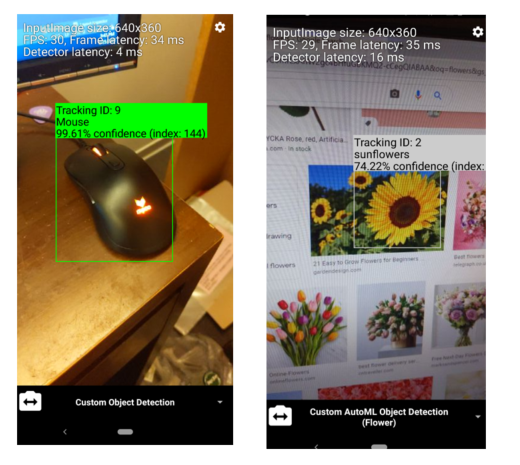

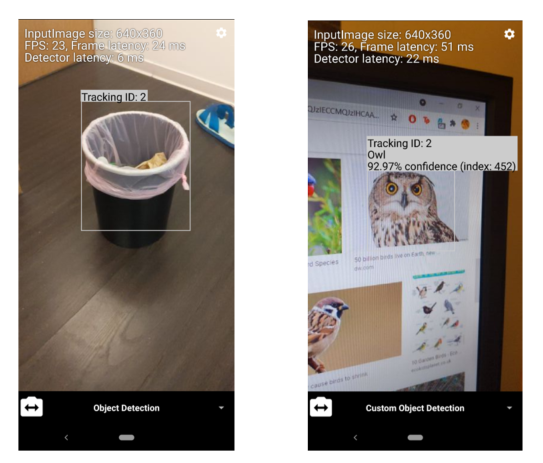
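The two-loop pattern described above (measure everything first, then draw line by line) can be sketched without any Android classes. Everything here is an assumption made for illustration: the class “LabelLayoutSketch”, the fixed “CHAR_WIDTH” and “LINE_HEIGHT” values, and the choice to stack the text above the top edge of the box. Real drawing code would measure text with the platform’s own tools rather than a fixed width per character.

```java
import java.util.ArrayList;
import java.util.List;

final class LabelLayoutSketch {
    static final float LINE_HEIGHT = 60f; // assumed height of one line of text, in pixels
    static final float CHAR_WIDTH = 30f;  // assumed fixed width per character, in pixels

    // First loop: find the width and height of a background that fits every line.
    // Returns {width, height}.
    static float[] measure(List<String> lines) {
        float width = 0f;
        float height = 0f;
        for (String line : lines) {
            width = Math.max(width, line.length() * CHAR_WIDTH);
            height += LINE_HEIGHT;
        }
        return new float[] {width, height};
    }

    // Second loop: compute the y position of each line, stacked downwards
    // so that the last line ends at the top edge of the bounding box.
    static List<Float> linePositions(float boxTop, List<String> lines) {
        float y = boxTop - measure(lines)[1]; // top of the text background
        List<Float> positions = new ArrayList<>();
        for (int i = 0; i < lines.size(); i++) {
            y += LINE_HEIGHT;
            positions.add(y);
        }
        return positions;
    }
}
```

Measuring in a separate first pass is what lets the background rectangle be drawn once, at its final size, before any text is placed on top of it.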

That’s about it! Here are some examples of the detectors in action:

For more information on this specific API, visit Google’s page here. Also, visit our website’s blog section to find all the latest news and tutorials on the VIA VAB-950!